I have been fortunate in my career to serve a wide range of large businesses globally

across several complex industries, including retail, IT, telecommunications and entertainment.

While diverse in many ways, enterprises in these industries all can be classified as what

I call highly transactional businesses.

Whatever the products or services offered,

such businesses conduct commerce through many, many transactions.

Some run on a large assortment. Some sell consumables (read: high-frequency).

Others offer goods or services that drive very frequent customer interactions.

All share, in my view, a kind of operational complexity.

Whether because of complex supply chains, uncertain demand, changing competition,

high volatility or all of the above,

these businesses must confront and manage the operational complexity to survive.

One of my early mentors used to say (I am paraphrasing a bit here):

The keys to initial success in business are identifying a differentiable niche and forming a coherent strategy to offer products and services to that niche in a profitable way.

This always seemed true to me, and still does.

I would add, though, that the key to continued success is

to execute on that strategy in a manner that is highly aware, adaptive and resilient.

Highly transactional businesses, in my view, survive and thrive based on three key factors:

- how often decisions are made (and challenged) by data and insights

- how well they anticipate and quantify uncertainty in planning and goal-setting

- how well they instill a culture of awareness, adaptation and resilience in execution

In effect, my grandmother (and perhaps yours) might have asked in a simpler way:

How well do these businesses make lemonade out of the lemons that life gives them?

In my years supporting analytics, demand modeling, and price optimization systems for many of these firms,

I have had the opportunity to see firsthand how different enterprises around the world make both

strategic and tactical decisions. While difficult to rank or rate in any absolute sense

given the vast diversity of situations, I’ll be so bold as to say that I have observed in

these engagements what one might call a continuum of efficacy.

Put another way, I have seen what I would call good, great, and exceptional execution.

As no enterprise of this kind survives for too long making bad decisions repeatedly,

I have not seen many cases of poor execution!

I have been reflecting a lot lately on how to foster and nurture this culture of awareness,

adaptation, and resilience, especially for organizations that are large, complex and diverse.

With a nod to Tom Peters' classic In Search of Excellence: Lessons from America’s Best-Run Companies,

what makes these firms exceptional?

Any highly transactional business, by definition, has to make a lot of decisions.

Nearly all leaders must balance top-down and bottom-up management thinking, delegating authority and granting autonomy

while still enforcing adherence to strategy and compliance on legal and ethical matters.

So what did I observe that made the difference?

It isn’t that, in my view, the exceptional businesses made more decisions. Instead, they made more impactful decisions, more quickly.

In the context of these highly transactional businesses, a more impactful decision tends to be a larger-scale decision.

Rather than make thousands of small, individual decisions about different parts of a product category or system in an attempt to “micro-optimize”,

the most exceptional firms, in my observation, form a strategy, set rules for tiering and assortment and let their systems cascade the "big" decisions

automatically to cover the full range in a predictable, logical way. At the same time, they are ready to adapt that decision as conditions change

with a deliberate and regular effort to gather data and insights that could challenge their original assumptions. The very best firms I observed

go even further. Knowing some of their assumptions will not hold, they make specific plans for what to do when (not if) demand doesn't match supply.

Instead of "dealing with it", they calibrate their financial assumptions with these contingency plans from the start.

Easy to say, and hard to do, right?

On the premise that culture must be framed by leaders, I started thinking about what individuals like me should do

to make more impactful decisions that are well-informed by data and insights.

We have only so much bandwidth on any given day,

so we must think about scaling ourselves if we are to scale our organization.

Taking lessons from my work with these clients across industries and geography as a start,

I then did some rigorous self-reflection on my own projects and programs, and on how I had driven the

decision-making and review processes. I analyzed areas where I was not happy with my performance or that

of my teams. With that introspection, I found some common patterns.

My assessment of performance on a complex project had far less to do with the number of surprises or setbacks

and far more to do with how my teams and I sequenced the work and deliverables. For my projects, at least,

the riskiest and hardest to measure elements were all front-loaded in the program - regardless of whether it was

convenient or not. In these cases, we built trust in the data first and ensured there were rigorous and thorough acceptance tests before moving that

data into the larger systems where business users could review.

Putting all this together, I distilled my analysis into a framework that I call “Focus, Frame and Act.”

Capturing the best of what I observed from work with clients and the lessons from my own work,

I offer this in the form of five questions:

Focus, Frame, and Act

A Decision Optimization Planning Framework for Leaders

- What data do we need? What other data might we want? How do we acquire it all at the pace and scope that we need?

- What insights do we need from this data, so we know which things don’t matter?

- How will we successfully confront the full scale and velocity of the data as early as possible to front-load risk and avoid delays later?

- How will we ensure that this initiative drives "directional review", using data‑driven insights to quantify uncertainty and to challenge past assumptions and decisions?

- How will this initiative enable my team and me to scale ourselves to make fewer, more impactful decisions?

I am passionate about the art and science of decision optimization. I believe that innovation in this area - across both processes and systems - can enable individuals and organizations to be more successful, especially in times of rapidly changing business conditions. Powerful systems and tools to acquire data, to generate insights, and to inform key decisions are broadly available - at relatively low cost. I see a tremendous opportunity for organizations to increase their successful use of these tools to become more aware, adaptive and resilient in the face of volatility and uncertainty.

Keeping traditional design and development practices unchanged may not be the best path to success today.

Decision makers today need more data - from many more sources - and they often need it in real-time.

In the "Crawl, Walk, Run" methodology that most of us follow for enterprise software implementations,

we tend to start with a representative sample of data along with a concept of how to generate the insights and support decisions through process flows.

Stakeholders sign off on the prototype and then the design / implementation team takes the solution to scale.

Unfortunately, this last scaling step is often problematic. Misunderstandings about the nature of data at scale,

system performance issues, batch windows, infrastructure capacity issues

and other factors often do not appear until the very late stage of a project cycle.

Fixing these problems may involve eliminating data, simplifying models or cutting away some use cases just to “make it work.”

While reasonable in the heat of the project, these schedule compromises may reduce the value of the solution

greatly as deadlines, budget and pressures to deliver begin to weigh on the teams.

A hollow victory often emerges in these cases when the new solution is functional

but doesn’t provide much of the needed benefits.

Users lose enthusiasm, or grow far more skeptical of the insights,

after seeing issues appear late in the project.

While the teams counter these issues by planning enhancements in later versions,

the business overall may not recognize (much) value until much later after one or more of these (originally unplanned) updates.

In the worst cases, the organization may lose sight of the original goals as business conditions change.

With demand and supply volatility likely to be at historical peaks for some time,

the business conditions may change so significantly before a new system can be built and released that a "re-think" is required.

Consider this little axiom I have found to be true (it is a tribute to Yogi Berra for US baseball fans):

The longer it takes, the longer it takes

Delays tend to compound on themselves. Key premises or attributes of our prospective solution may only be valid

for a limited time. The root cause of a particular delay may indicate there are other effects that are not yet known. At a certain point, we have to re-think parts of the solution.

"Re-thinks" almost always mean more delay.

Rinse and repeat.

We think innovation needs an overhaul.


So what to do?

One key defense against this problem in decision support and optimization problems is to focus on scale first.

Success can come faster by "front-loading" the data cleansing and scaling problems.

Rather than finding out a few weeks before a go-live that our storage infrastructure is undersized,

or that our databases can't ingest data fast enough,

instead organize projects to prove the data is usable, accessible and clean - at scale - before we get into decision automation and workflow changes with users.
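As a minimal sketch of what "proving the data at scale" could look like, the check below streams through a full feed once and reports row volume, the share of incomplete rows, and raw throughput. The function name, field names and thresholds are all hypothetical placeholders, not part of any particular tool; the real limits would come from your own batch windows and data contracts.

```python
import time
from typing import Iterable, Mapping, Sequence


def acceptance_check(
    rows: Iterable[Mapping[str, object]],
    required_fields: Sequence[str],
    max_missing_rate: float = 0.01,  # placeholder threshold (assumption)
    min_rows_per_sec: float = 0.0,   # placeholder; derive from your batch window
) -> dict:
    """Stream through the full feed once and report volume, cleanliness and speed."""
    start = time.perf_counter()
    total = 0
    incomplete = 0
    for row in rows:
        total += 1
        # A row is incomplete if any required field is absent, None or empty.
        if any(row.get(field) in (None, "") for field in required_fields):
            incomplete += 1
    elapsed = max(time.perf_counter() - start, 1e-9)  # avoid division by zero

    missing_rate = incomplete / total if total else 1.0
    rows_per_sec = total / elapsed
    return {
        "rows": total,
        "missing_rate": missing_rate,
        "rows_per_sec": rows_per_sec,
        "passed": total > 0
        and missing_rate <= max_missing_rate
        and rows_per_sec >= min_rows_per_sec,
    }


# Hypothetical feed: 10,000 rows where every 50th row is missing a price.
feed = ({"sku": f"S{i}", "price": None if i % 50 == 0 else 1.99} for i in range(10_000))
report = acceptance_check(feed, ["sku", "price"], max_missing_rate=0.05)
print(report)
```

The point of the sketch is the ordering rather than the code itself: a check like this runs against the full-volume feed before any decision automation or workflow design depends on it.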

Run to Scale

If we make a small adjustment to our trusty "Crawl, Walk, Run" methodology,

we avoid the problems of trust in the outcome by building trust in the data and in our ability to deliver consistently first.

Such an adjustment doesn't have to mean postponing benefits or taking longer - far from it.

We can employ real-time data streaming and semi-structured data handling techniques to deliver insights quickly.

As the rest of the project moves ahead, increasingly valuable insights emerge as the system comes together.

When users have experience with the real data early, we focus our trust and consensus-building on the system, the decisions and the outcomes.

Without trust in the data, users will not embrace a new system. If users lose trust late in the cycle,

the effort and time to regain that trust compound to create (longer) delays in the program. Why take unnecessary chances?

Adding resources, focus, and checkpoints early for data quality and scale will make the project more likely to deliver on the planned benefits when needed.

In the most complex cases, you may want to consider bringing in a specialized data integration team to coordinate efforts with vendor and client.

Consider in your next project how you can "run to scale" and help users benefit from new data sources even ahead of the rest of the system,

while de-risking your schedule and building trust in the outcomes to follow.

Explore how you might deliver reporting or intermediate insights "for real" to drive closure sooner on the quality and scale validation.

Look at integration opportunities with other systems that are low-risk and valuable to stakeholders -

areas where your newly packaged data or partial implementation can help solve related problems, again while building stakeholder trust.

You may want to consider sharing data quality monitoring with stakeholders to learn about other "hidden problems" that might exist elsewhere in the landscape, avoiding future problems.

Focusing on data scale and quality early will not only ensure you deliver value earlier, but also that you reach the final integration phases sooner as users have more time to build confidence in the supporting data.

At 3 Leaps, we help highly transactional businesses to automate, scale and speed decision-making.


Tomorrow may not be like today

This statement has probably never been more true in the modern era.

Successful decisions must be informed by data in real-time.

Trends may start and end in weeks or even days.

Demand shifts may occur in weeks or even days with “the new normal” driving radical changes in our expectations of the regular seasons in business.

Opportunities may arise in unexpected areas. Excess supply for one market may be “just enough” for another.

The retail customer may be more open to purchase different goods and services from brands she trusts or from new brands that have a compelling offer.

Firms may consider new suppliers when availability or fulfillment problems arise.

Competitors may not be paying attention to the changing environment,

creating frustrated customers willing to try new things and to work with new suppliers.

I believe that now is the time to expand the use of real-time data in decision optimization.

Some industries such as payment, hospitality and ticketing are literally built using real-time data.

Other industries leverage such data for real-time inventory display, pricing updates or rate changes.

In many cases, though, such data may have a narrow (if important) use in the customer-facing web properties.

There is much more opportunity, however, to help us resolve potentially conflicting signals

as we steer through the mist that volatility often creates.

Fortunately for us, key trends in real-time fraud and anomaly detection have driven enormous investment in the tools and technologies

needed to make real-time data accessible and useful.

A Global Market Insights report published in August 2019

sized the market at USD 20B in 2018 - growing to USD 80B in 2025.

The investments in this space are immense; entire solutions have arisen in the last few years around the problems of real-time

data acquisition, filtering and analytics. Since most fraud detection systems operate live and “in the transaction,”

the tools and technologies that support the industry have grown to handle data at a truly immense scale,

with decisions leveraging the data made in seconds - or even milliseconds.

Thanks to these developments in the information security and fraud detection spaces,

we can deploy solutions based on proven technologies that - almost by definition - are operating successfully at scales far greater than we would typically need.

A highly transactional business by definition conducts business through hundreds of thousands or millions of transactions over a reporting period.

Are analysts and leaders assessing these interactions on a daily (or hourly) basis to spot potential supply problems or changing trends?

We are often conditioned to filter odd results and anomalies using the “dirty data” explanation.

The inventory system shows stock or capacity available but no sales occur in a peak period.

At best, we might investigate this as a “front-stocking” problem where the goods or services are not accessible to the customer.

At worst, we might ignore the unexpected signal as a blip on the premise that we “wait for a later review.”

But what if the sales data are accurate? Is there unexplained loss or wastage? Or has demand suddenly shifted? What if customers simply didn’t show up?

Complex businesses are often in a bit of what one might call a “decision fog” - making decisions with incomplete or imperfect data.

Adding a wide array of data sources that complement one another can help firms navigate through the mist.

Consider some possible cues that could help in our lost sales example above:

- Site entry/exit data (physical or virtual, as appropriate)

- Foot traffic/impressions

- Stock movement

- Abandoned sales

- Mobile (person) location data

Consider what we might call situational awareness. In the face of conflicting indicators, what other data are available to tell us what is happening on the ground right now?

In the "conflicting signals" example above, we probably won’t know the root cause of our anomaly.

If we can use cues such as mobile location or site entry/exit data to confirm the presence or absence of customers, however,

we can form a better hypothesis and take action more decisively.

With a central “decision console” that brings these data elements together,

leaders can make inferences and probabilistic judgments faster to help the firm keep moving in the right direction.
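To make the idea concrete, here is a minimal sketch of how a decision console might turn a few complementary signals into a working hypothesis for the "stock available but no sales" anomaly. Everything in it is an assumption for illustration: the `SiteSnapshot` fields, the `triage` function, the 50% traffic threshold and the wording of the hypotheses. A real console would tune these rules to the business and treat the output as a prompt for judgment, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class SiteSnapshot:
    """One site's signals for a single period (all field names are hypothetical)."""
    stock_on_hand: int     # units the inventory system reports as available
    units_sold: int        # completed sales in the period
    visitors: int          # site entry/exit or foot-traffic count
    abandoned_sales: int   # baskets or transactions started but not completed
    typical_visitors: int  # expected visitors for a comparable period


def triage(s: SiteSnapshot, low_traffic_ratio: float = 0.5) -> str:
    """Return a working hypothesis for a 'stock but no sales' anomaly."""
    if s.units_sold > 0 or s.stock_on_hand == 0:
        return "no anomaly: sales occurred or nothing was in stock"
    if s.visitors < low_traffic_ratio * s.typical_visitors:
        return "demand shift: customers did not show up"
    if s.abandoned_sales > 0:
        return "conversion problem: customers tried to buy but did not complete"
    return "access problem: customers present but stock may not be reachable"


# Stock on the shelf, no sales, and only 12 visitors against a typical 100.
print(triage(SiteSnapshot(stock_on_hand=40, units_sold=0, visitors=12,
                          abandoned_sales=0, typical_visitors=100)))
```

Even rules this crude replace "dirty data, wait for a later review" with a specific hypothesis that someone can confirm or refute quickly.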

Whatever the situation, periods of high volatility in supply and demand require us to take some definitive action to calibrate and adjust our decisions.

I like to think of real-time data like a wonderful street snack: hot, fresh, and available just when and where we need it.

Building a comprehensive picture using observations from all the areas available can give us confidence to make better decisions quickly.

While we can also use this data to develop insights through more sophisticated modeling (a topic for another day),

our decision console would emphasize live data with minimal processing.

Let’s recap. We are in a decision fog exacerbated by high volatility in supply and demand.

Our models have conflicting inputs and may generate recommendations that are very different compared to period norms.

Even worse, poorly designed models may be unstable, meaning the results vary widely and wildly as inputs change slightly.

Our best defense in the heat of uncertainty is to enrich our situational awareness.

With a wide range of signals to help us choose the best alternative in the face of conflicting or inconsistent data,

we can move beyond the inaction often fostered by the assessment of “dirty data” or “weird results”.

To build our decision console, we first need to make an inventory of the data we have, as well as data we must acquire from new sources.

Then we need to acquire and stream the data, using classification, clustering, filtering and other data science techniques as needed.

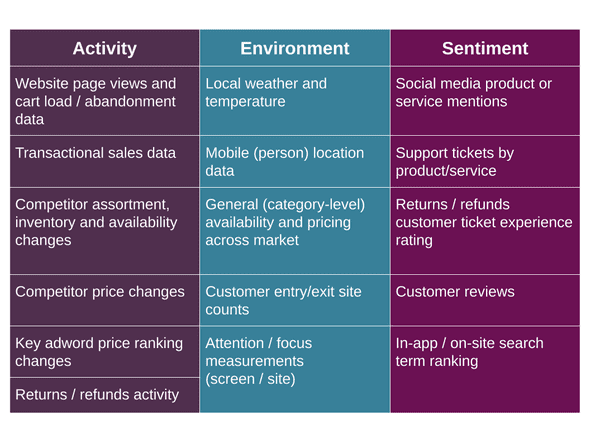

Here is an example of such an inventory as we might apply to an omni-channel commerce business in retail, food service or entertainment:

In such a decision console, there is no one data element that stands above all others.

Instead, it is the complete picture on one screen that helps us to confirm or to refute a hypothesis quickly and to make a decision.

When conditions are rapidly changing, we must reassess frequently. As I’ve detailed in another post,

I believe it is critical to build a decision culture where we not only review our decisions as conditions change

but also build a process to systematize such reviews.

Incorporating more real-time data in decision-making is not a substitute for building and using sophisticated data models - not by even a little.

From my perspective, decision-making should be thought of in terms of navigating and steering.

Our strategies set our course, and our data models help us navigate the best course towards that destination.

Adding real-time data into a decision console can help us steer around bumps in the road ahead

as we navigate through the fog and will help us see that proverbial “Road Closed” sign sooner,

so we can adjust our course and get to our destination on time and ahead of the others who also want to serve our customers.

Winning the trend means spotting the trend early with eyes and ears attuned to the activity, environment and sentiment data around us. Especially in times of high volatility such as now, winning the trend means driving a disciplined, consistent process that encourages regular decision review and a deliberate and regular effort to gather data and insights that challenge original assumptions. Use real-time data to cut through the fog and make the most informed decisions possible when the path forward is not clear.

I came across an excellent article from last week on price elasticity for gasoline consumption from Lutz Kilian and Xiaoqing Zhou, two economists in the research department at the Dallas Fed.

See this link for the piece, which is a quick read that really got me thinking. If you are in retail or involved in pricing, I’d encourage you to read this - twice even. I was actually surprised to see that the conventional wisdom on fuel consumption assumed that short-term demand was essentially inelastic to price. For those who are not pricing nerds like me, this basically means that economists say people will buy essentially the same amount of fuel in the short-term even if price changes, and that demand shifts only occur over much longer time scales where folks might decide to buy a more fuel efficient vehicle or switch to some other mode of transportation. One can see this conventional wisdom rooted in statements like “people have to commute to work, so they will buy fuel even as price changes.”

Digging deeper into the assertions the two economists make here brought me some insights that I think may be helpful for other kinds of demand modeling.

So what is the big deal about this discrepancy in demand?

As I was reading the article, I thought about being in the car with my dad as a kid during the 70s.

My father always had (and still does have) an encyclopedic knowledge of the local gas prices, from the times when a local Spur station had regular at $0.18 USD per gallon (circa $0.05 USD / liter) while the competition might have been at $0.26 USD (circa $0.07 USD / liter, 44% higher).

A quick call to him refreshed me on his mental decision tree, timing and shifting fuel purchases so if necessary he could drive a bit further to get cheaper gas (fuel).

He also reminisced on the call about his mother making his father stop when traveling only at stations that sold Green Stamps - an interesting testament to the power of loyalty programs that is 75 years old.

While some of my dad's tactical purchasing was probably driven by weekly or monthly budgeting, I recall him saying things like “for 10 cents a gallon, it is worth going 5 miles further” (obviously with the numbers changing depending on fuel price).

I remember him only putting $5 in the tank if he had a low fuel level and had to buy at a more expensive location, just to be sure he had enough to fill up later at a less expensive location.

I didn’t know what price elasticity was at the time, but it sure seems like the fuel purchase decision was highly elastic to price for my father at least.

This turns out to be an important lesson, though I didn’t know it then. As we will see shortly, we can (and should) model demand separately from modeling specific purchase decisions.

Doing so can unmask some of what we call “aggregation bias”, where we might draw conclusions on individual behavior based on the results of the group.

How, you might ask? What if your model doesn’t consider localized variations in price? Taking a monthly average or using an overly wide geographic estimate might blur the effect of individual purchase decisions.

Consider what happens if one were to use monthly prices instead of daily prices. Maybe I am watching my spending (like my father did in planning those fuel purchases).

Maybe I can’t wait two weeks to buy fuel, but I can wait two days. Maybe I will postpone some trips (again for a few days) until I buy fuel later.

Or, maybe I drive across town to fuel up at a cheaper location.

Over the measurement period, I bought gas, and so we see that demand evident in the data.

If the pricing were blurred by averaging over a month, we might not detect that I either waited for prices to drop for a few days (temporal blurring) or drove a bit further (spatial blurring).

To a model built on aggregated data, I “bought like the mean” (not precisely correct, but close enough to illustrate the point).

At a high level, it looks as though the demand response is relatively insensitive to price changes.

This is wrong, and we can learn from the mistakes here to ensure our models are better.
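A toy example can illustrate the temporal blurring described above. The prices and the buying rule are invented for illustration (they are not taken from the studies): a single consumer needs 10 gallons a week and simply buys on the cheapest day of each week. The sketch compares what a monthly summary records with what the daily data shows.

```python
from statistics import mean

# Hypothetical posted prices (USD per gallon) for four identical weeks, Mon..Sun.
WEEK = [2.60, 2.55, 2.50, 2.45, 2.40, 2.50, 2.60]
daily_price = WEEK * 4

# Toy consumer: needs 10 gallons a week and buys it all on that week's cheapest day.
daily_gallons = []
for start in range(0, len(daily_price), 7):
    week = daily_price[start:start + 7]
    cheapest = week.index(min(week))
    daily_gallons += [10.0 if day == cheapest else 0.0 for day in range(7)]

# Monthly view: one row, total gallons against the average posted price.
monthly_gallons = sum(daily_gallons)
avg_posted_price = mean(daily_price)

# Daily view: the price actually paid, weighted by gallons bought.
spend = sum(p * q for p, q in zip(daily_price, daily_gallons))
avg_price_paid = spend / monthly_gallons

# Share of volume bought on days priced below the monthly average.
below_avg = sum(q for p, q in zip(daily_price, daily_gallons) if p < avg_posted_price)
share_below_avg = below_avg / monthly_gallons

print(f"monthly view: {monthly_gallons:.0f} gal at avg posted ${avg_posted_price:.3f}")
print(f"daily view:   avg paid ${avg_price_paid:.3f}, "
      f"{share_below_avg:.0%} of volume bought below the average price")
```

In the monthly row, this consumer bought 40 gallons at an average posted price of about $2.51, which looks like steady demand. The daily rows show every gallon was bought at $2.40, on the cheapest day available. The timing decision is entirely price-driven, and the monthly average hides it.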

If you work in data science or economics around pricing or demand modeling, I’d encourage you to read the articles referenced in this piece.

As an example, a study by Levin et al referenced from the article is a powerful reminder of the importance of using data that is appropriately granular.

The study shows how daily pricing fluctuations are much higher than would be caught by a simple smoothing technique such as a period average.

This is not a minor or trivial conclusion, as I suspect anyone charged with pricing can assert. Pricing is tactical as much as strategic. While the movements over time may average out, it does not follow that we can just “zoom out” and look at behavior over longer time periods.

Such smoothing or averaging is analytically risky (in my view) wherever someone can delay (for a short period) or alter a purchase decision (i.e. go to a different place) easily.

If we aren’t measuring closely enough, we will miss the actual decisions that consumers make in the blur of averaged prices and purchases made within a larger locale like a city.

In this same study, the authors noted a very strong “within-week” pattern for fuel purchases.

I can imagine a headline quoting what they said in the article: “...consumers buy roughly 17 percent more gasoline on Fridays than the daily average and buy 15 percent less on Sundays than the daily average…”

On the surface, the conclusion is interesting, but digging deeper alerts me to another potential fallacy relating to data depth.

In this case "purchase amount" blurs the transactional frequency and the per-transaction expenditure in favor of only total spend.

A consumer might reasonably decide to buy less at a moment in time (like my father only putting a few dollars worth in the tank until he could find a cheaper source later).

The Levin et al study concludes that expenditure-per-transaction doesn’t vary nearly as much as the transaction frequency for "within-week" analysis.

Put another way, most of the variation by day in total expenditure comes from the frequency of transaction, rather than from buying more or less in a transaction.

You might not notice this unless your model analyzes both the frequency of transactions and the amount per transaction. By the same reasoning, you might miss the opposite result in a different situation.

Blurring to “total expenditures” could (and probably would) create another aggregation bias which could mask important insights about demand, hiding the true number of purchase decisions and the intensity or propensity-to-buy for each.
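A small sketch shows why the split matters. The transactions below are made up for illustration; the idea is only that total expenditure for a day equals the number of transactions times the average amount per transaction, so keeping both factors reveals which one is moving.

```python
from collections import defaultdict

# Hypothetical transactions: (day of week, amount spent in USD).
transactions = [
    ("Fri", 30.0), ("Fri", 28.0), ("Fri", 32.0), ("Fri", 30.0),
    ("Sun", 29.0), ("Sun", 31.0),
]

# Group the raw transactions by day instead of storing only a daily total.
by_day = defaultdict(list)
for day, amount in transactions:
    by_day[day].append(amount)

# total = transactions x average ticket; keep all three for each day.
summary = {}
for day, amounts in by_day.items():
    count = len(amounts)
    total = sum(amounts)
    summary[day] = {"transactions": count, "avg_ticket": total / count, "total": total}

for day, row in summary.items():
    print(f"{day}: {row['transactions']} transactions x "
          f"${row['avg_ticket']:.2f} avg = ${row['total']:.2f}")
```

In this toy data, Friday's total spend is double Sunday's, yet the average ticket is identical on both days. A model fed only the totals could not tell whether people bought more per visit or simply visited more often.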

As if this were not enough, then consider the problem of different grades. The Levin et al study asserts that about 15% of the fuel purchased in the US is for a higher grade than standard.

How might the choice of grade vary with price or price delta? Without considering the specifics of each transaction, we'd lose this in the blur of averages.

Maybe consumers (in the US for this study) buy fuel in relatively the same amounts each time, at the same grade. We can’t just assume this to be true for every situation.

By considering transactional frequency, basket size, and product choice as separate (if not completely independent) variables, we get a much clearer picture of how, when and where the purchasing happens.

How much you care about these insights depends on who you are in the food chain of fuel distribution and marketing.

If you are the one selling fuel on the street, I can guarantee you that it is important to understand these variations since you win or lose at a specific location based on what happens each day.

Using the economists’ scaling (which differs from what I see as the typical convention for retailers, though the results are the same in the end), an elasticity of -1 means that for every 1 percent increase in the price of fuel, consumption falls by 1 percent.

A value of 0 means that a change in consumption does not occur in response to a change in price.

The researchers at the Fed who wrote this piece I referenced note several sources that estimate elasticity for fuel in the US at between -0.01 and -0.08, or nearly inelastic demand.

Several recent studies quoted by the Dallas Fed researchers covering the US and Japan using much more granular data concluded the elasticity was in a range of -0.27 to -0.37.

If you are a pricing or economics wonk, your jaw may be hitting the floor as you read this.

If not, let me just say that this is an enormous difference - on the level of the difference between ice cold and pretty warm.
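To put numbers on that difference, here is a small sketch that applies the definition above (percent change in consumption is roughly elasticity times percent change in price) to the end points of both ranges. The 10 percent price move is a hypothetical figure chosen only for illustration, and the linear rule is a first-order approximation that is most reasonable for modest price changes.

```python
def pct_change_in_consumption(elasticity: float, pct_price_change: float) -> float:
    """First-order approximation: % change in quantity = elasticity x % change in price."""
    return elasticity * pct_price_change


PRICE_INCREASE_PCT = 10.0  # hypothetical price move, chosen only for illustration

# End points of the two elasticity ranges quoted above.
estimates = {
    "older, low end": -0.01,
    "older, high end": -0.08,
    "granular data, low end": -0.27,
    "granular data, high end": -0.37,
}

for label, elasticity in estimates.items():
    change = pct_change_in_consumption(elasticity, PRICE_INCREASE_PCT)
    print(f"{label:>24}: {change:+.1f}% consumption "
          f"for a +{PRICE_INCREASE_PCT:.0f}% price move")
```

Under the older estimates, a 10 percent price increase trims consumption by somewhere between 0.1 and 0.8 percent. Under the estimates built on more granular data, the same move cuts consumption by 2.7 to 3.7 percent.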

From my days serving convenience and fuel retailers, I know how seriously the retailers take pricing of fuel.

The frequency and degree of price changes that most retailers make, with high variation across outlets, tell me that they knew already what these studies confirmed - local and day-over-day differences present a very different picture than a broadly summarized model would.

Were we to make the same mistaken assumptions in pricing for other goods and services, we would be fighting a trend and probably driving the wrong strategy for those products.

Coming full circle to those trips with my dad when I was learning about price elasticity without even realizing it,

I’m making three mental notes for my next demand estimation and modeling exercise.

Remembering how he would decide whether to drive a bit further or not, and whether to wait a few days or not, for his next fuel purchase, I can see a real example of aggregation bias which I didn’t even know existed.

This lesson of misunderstanding fuel price elasticity should be a reminder to us of the dangers of aggregation bias.

If my fuel retailer colleagues had taken these incorrect assumptions to heart, they’d have been losing their shirts to the competition who were (correctly) treating pricing as both tactical and strategic.

Here’s how I am summarizing my takeaways, as good reminders:

Acquire data at the transactional level wherever possible, and in any case do not aggregate too highly when blending in other data.

For most goods and services, I’d say daily aggregation would be a good tradeoff for comparison with other data sources. Less frequent (i.e. higher interval) purchases can probably use higher aggregations, while commodities and financial assets would likely need intra-day.

Use high spatial, channel or geographic resolution so you capture the tactics consumers might use to get a better deal by choosing a different source.

Even better, see if it is possible to model the additional effort or cost of using a different source as a possible secondary, differential, or "up-sell" demand indicator. For retail goods, you would want to consider a level below MSA (in the States), postal code or other locale - think about how to understand “intra-city” or “cross channel” travel that might be lost in aggregated purchase statistics. Even “intra-business” differences might be important, such as a customer choosing a different fulfillment method or visiting a different format (hyper vs local store).

Determine how to model differences in purchase quantity over space and time, especially for goods and services where it is possible to buy more or less because of an ability to store.

We’ve all seen the near hoarding of paper products during COVID-19 as the most extreme example of “pantry loading”. How much our customers buy when they do make a decision is also very important, particularly when they have a lot of control over timing.

If economists can (apparently) estimate fuel price elasticity incorrectly for decades, then I think we can all agree we might have other similar problems in our own respective models. I’m taking an extra look now at aggregation bias in everything I do, thanks to the wisdom of my father and some very good recent work by economists who dug deeper in the data to uncover a rich variability previously hidden by averages.

I’ve seen a number of reports in the business press over the last few days about the economy showing signs of recovery.

I am seeing this firsthand from a different perspective at the moment. My wife and I are on a 5000-mile (ca. 8000-km) road trip odyssey

running from South Florida to coastal Texas and then on to Boston, as

we help one of my sons relocate to start a new chapter in his life and career.

Preparing for this trip, I was not exactly sure what to expect along the way.

Purely from anecdotal observation as I write from the passenger seat of a moving van in the second of three legs in the journey,

I can confirm that the US (at least what I can see from the road) is indeed awakening.

There are more people on the road than I thought there would be, and it has been great to see people moving around.

Here’s what I’ve learned so far.

- The highly transactional businesses we’ve visited in retail, hospitality and food service are adapting

We had a lovely dinner in Friendswood, Texas (south of Houston) a few days ago with my family at the Baybrook location of Whiskey Cake.

This is a favorite of our Texan twenty-somethings, and I was impressed by how they have adapted to the COVID-19 recovery.

Our server brought out a handheld device with a giant QR code on the screen, letting us know that the restaurant no longer used physical menus.

There was a bit of theater as she went around helping each person scan the code to bring up the menu on our respective mobile devices, taking drink orders along the way.

While this process might slow things down when the restaurants are all back to peak capacity on weekends, the connection provided an excellent way to get everyone engaged and to reinforce that concept of “cleanliness theater”

that I first read about in this article by Larry Mogelonsky.

Digital menus are great for a lot of reasons (lower costs, easier to change items or run promotions, etc.).

Here I think the firm used the concept of an e-menu and drove the change over the “acceptability threshold”

by having the server explain that this was better for everyone from a cleanliness perspective.

They were simply using the QR code to direct guests to the menu available on their website; no doubt they could add more functionality as time progresses.

In this case, what impressed me was how they took the opportunity to eliminate a potential cleanliness issue and make a process change for convenience at the same time.

They saw the opportunity to “sell” the idea of an e-menu by having the servers explain the advantage of this new option.

In a different visit for takeout to Hearsay on The Strand in Galveston,

we found an excellent layout for diners with well-spaced tables and lots of little cues to reinforce the focus on cleanliness.

Their website actually calls out the steps they take and confirms the current (for the locale) limits on capacity.

I arrived to pick up my takeout order and realized I needed to make an addition.

The hostess very politely informed me that I could not actually touch the menu while making my new selection,

but that she was happy to set the menu out for me. Everyone kept their distance, and the staff was wearing masks and gloves that were color-coordinated.

While I am anything but a fashion expert, I think this detail was a small sign to diners that management is taking the precautions seriously.

Through about 1900 miles (ca. 3000 km) of the journey so far as I write this from the passenger side of a moving van, nearly every single employee in the grocery stores, restaurants, fuel stations, travel stops and hotels we’ve visited was wearing a mask.

I cannot recall a single case in food service where the staff and facilities were not in order.

Will this be a permanent fixture? Honestly I hope it will not be necessary.

But it is good to see that business owners are taking the steps needed to meet the requirements of the present,

so we can get back to a more normal activity level.

- There is work to be done in contactless payment

I’ve found using contactless payment to be a frustrating process over the last few years.

In one trip to London a while back, I decided I was going to use my watch (contactless app) to pay for the cabs exclusively when not on the Tube.

I’d say about one out of four tries worked, and at some point I realized I was holding up the drivers or new passengers by persisting.

My experience using a phone has been better, but even the phone payment process is still not ready for prime time, in my view.

Several of the large oil company brands (“flags” to many in the petroleum industry) are supporting contactless readers in fuel dispensers now,

but I’ve not been able to use the "at-dispenser" options with the cards and devices I have on the trip.

I am experimenting with the ExxonMobil and Shell mobile apps and will update the post with the results.

In fairness, ExxonMobil has added messaging on their website about increased convenience and decreased pump contact

(see ExxonMobil Rewards+ App).

Update 2020-05-28 from about mile 3000 (ca. 4800 km) in the trip: Contactless payment worked at Sunoco via an NFC reader on the dispenser.

I successfully used my card in a contactless transaction at a Sunoco in Pennsylvania en route to Boston.

Ironically, the second attempt was blocked by my bank due to fraud detection as I went from the moving van to our truck.

That required a card insert, but this is not Sunoco's fault.

Given the relatively low frequency of multiple fueling transactions at a single stop, the benefit of fraud detection blocking may outweigh the convenience benefit. Credit to Sunoco and their suppliers for getting this right!

I'll report next on the other apps as mentioned.

Update 2020-06-02 from about mile 4600 (ca. 7400 km): I successfully used the ExxonMobil Rewards+ app to authorize a pump in eastern South Carolina from my phone.

I had a problem earlier in a different station in Massachusetts but used NFC contactless there.

The application uses location effectively to identify the station and enables you to select the dispenser and authorize from the phone.

In this case, at least, no extra location codes or barcode scanning was required.

Congratulations to ExxonMobil and their partners for a very good experience.

The locations need to do more to promote this, as I suspect it would increase loyalty as people look to reduce physical contact with frequently touched payment terminals.

My wife and I were talking about the stress of shopping during a pandemic on the way over to Texas

as I was trying to convince her to put the contactless payment applications on her phone.

She had a perspective about this that had not occurred to me.

When I am at the grocery store picking up lots of different things, touching grocery carts and, in general, trying to navigate social distancing, the last thing I want to do is pick up my phone and use it for payment. I will just have to sanitize it when I get home.

In general, the lack of contactless payment is the biggest “miss” I see on this current road trip. Most stores, restaurants and hotels we have visited don’t have contactless payment options. In the few cases where we have seen the option, the establishments don’t have the marketing and messaging to inform the shoppers. Has there ever been a better time than now to drive behavior changes in payment towards contactless, as consumers are all thinking about ways to stay safe? Incidentally, my younger son did convince my wife to install Samsung Pay on her Samsung watch while we were together, so hopefully I will have some real-world experiences to observe before our trip is complete. The obstacle to my field testing will be finding businesses on our trip where contactless payment is available.

We’ve had excellent experiences on this trip with hospitality, having stayed in two hotels and one home marketed by Airbnb so far. For the Texas leg of our trip, we rented the (Airbnb-marketed) home to help our son pack up his apartment. Airbnb had a lot of messaging about cleanliness on their website and, based on my experience, has probably coached their hosts to present the same. This emphasis on cleanliness seemed higher than the last time I searched the site (pre-COVID-19). In the current environment, Airbnb and similar booking sites have another advantage in contactless payment for convenience and safety. We booked the trip with our credit card online before leaving and so had no card handling (high marks from my wife, even if this is the normal process). While the booking process hasn't changed, we saw more messaging about the "contactless experience" - another good example of highlighting a potential differentiator in service.

While online payment is a normal aspect of the booking process for companies like Airbnb, it highlights an area on which I think the hotel industry could focus. Most hotel chains have a prepayment option that provides a small discount as an incentive. The discount is nice, but we find that we are not always confident enough in our exact plans to pay ahead of time. Why can’t we replace the card swipe / insert at the check-in desk with a confirmation triggered from the mobile app? There are complications because of the “pre-auth process” used at check-in (verify card now, settle payment later when total is known). I would argue that now is the time to deal with these issues and to innovate on new ways to communicate with guests who are thinking about minimizing contact.

- Customers are thinking a lot more about cleanliness and contact, creating opportunities for new initiatives and messages that are relevant

My wife, who paused her real estate career in early 2019 to focus on supporting our family in other ways, continues to serve as Head of Procurement and Head of Health Services for the Thompson Family (among many other responsibilities). Enforcing a strict regimen of masks, hand sanitizer and social distancing that would make Dr. Birx extremely proud, she leads the way in our family to ensure we make this long trip safely. We are not alone here. The chatter with family and friends often centers on these topics of health and safety. Not meaning to imply any gender bias in this next observation, I’ve watched numerous women steer their husbands and children around other groups of people to keep social distancing in the shops and stores we’ve visited from Texas to Florida and then back into Mississippi so far.

Do we see everyone wearing masks? No, we do not. However, many people - even most people in some cases - are doing so. People are more aware and, if our lessons in marketing hold, more willing to consider changing brands or products to fit their new areas of concern around cleanliness and contact. If our front-line businesses focus on these areas in serious and visible ways, I think they will add customers through word-of-mouth recommendations from current customers. On the other hand, if they ignore these changing concerns, others will earn the business as customers switch.

Coming out of a Home2 Suites by Hilton in Mississippi earlier in the trip, my wife summed up the stay this way:

This place was really clean. I could smell the bleach on the linens and that made me happy.

These data are not going to show up in traditional historical reporting for some time. Now more than ever it is vital to use the immediate, real-time data we have available to us in our business and in our ecosystems. Only by looking at what is happening on the ground right now can we see how things are changing. Transactional data, customer call center transcripts and recordings, interviews with managers and employees in the field, focus groups, social media sentiment and supplier data can signal the changes that are happening, if we commit to analyzing the data and using the insights to challenge our current plans and assumptions.

Winning the trend means spotting the trend early with eyes and ears attuned to the activity, environment and sentiment data around us. Those who do so first will recover and prosper in a rapidly changing environment. Knowing the economic devastation that we have faced across our economy, I am happy to see on this trip real evidence of the creativity and innovation that our front-line businesses are showing.

Here’s to a safe and prosperous awakening for all!